这样是不是更严谨? 否则的话,中断后再次启动时, (第一个)入口地址仍会被添加到队列及写入到文件中. 但是现在有另外一个问题存在,如第一遍全部抓取完毕了(通过spider.getStatus==Stopped判断),休眠24小时,再来抓取(通过递归调用抓取方法). 这时不同于中断后再启动,lineReader==cursor, 于是初始化时队列为空,入口地址又在urls集合中了, 故导致抓取线程马上就结束了.这样的话就没有办法去抓取网站上的新增内容了. 解决方案一: 判断抓取完毕后,紧接着覆盖cursor文件,第二次来抓取时,curosr为0, 于是将urls.txt中的所有url均放入队列中了, 可以通过这些url来发现新增url. 方案二: 对方案一进行优化,方案一虽然可以满足业务要求,但会做很多无用功,如仍会对所有旧target url进行下载,抽取,持久化等操作.而新增的内容一般都会在HelpUrl中, 比如某一页多了一个新帖子,或者多了几页内容. 故第二遍及以后来爬取时可以仅将HelpUrl放入队列中. 希望能给予反馈,我上述理解对不对, 有什么没有考虑到的情况或者有更简单的方案?谢谢! |

||

|---|---|---|

| assets | ||

| en_docs | ||

| webmagic-avalon | ||

| webmagic-core | ||

| webmagic-extension | ||

| webmagic-samples | ||

| webmagic-saxon | ||

| webmagic-scripts | ||

| webmagic-selenium | ||

| zh_docs | ||

| .gitignore | ||

| .travis.yml | ||

| README.md | ||

| pom.xml | ||

| release-note.md | ||

| user-manual.md | ||

| webmagic-avalon.md | ||

README.md

A scalable crawler framework. It covers the whole lifecycle of crawler: downloading, url management, content extraction and persistent. It can simplify the development of a specific crawler.

Features:

- Simple core with high flexibility.

- Simple API for html extracting.

- Annotation with POJO to customize a crawler, no configuration.

- Multi-thread and Distribution support.

- Easy to be integrated.

Install:

Add dependencies to your pom.xml:

<dependency>

<groupId>us.codecraft</groupId>

<artifactId>webmagic-core</artifactId>

<version>0.5.2</version>

</dependency>

<dependency>

<groupId>us.codecraft</groupId>

<artifactId>webmagic-extension</artifactId>

<version>0.5.2</version>

</dependency>

WebMagic use slf4j with slf4j-log4j12 implementation. If you customized your slf4j implementation, please exclude slf4j-log4j12.

<exclusions>

<exclusion>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

</exclusion>

</exclusions>

Get Started:

First crawler:

Write a class implements PageProcessor. For example, I wrote a crawler of github repository infomation.

public class GithubRepoPageProcessor implements PageProcessor {

private Site site = Site.me().setRetryTimes(3).setSleepTime(1000);

@Override

public void process(Page page) {

page.addTargetRequests(page.getHtml().links().regex("(https://github\\.com/\\w+/\\w+)").all());

page.putField("author", page.getUrl().regex("https://github\\.com/(\\w+)/.*").toString());

page.putField("name", page.getHtml().xpath("//h1[@class='entry-title public']/strong/a/text()").toString());

if (page.getResultItems().get("name")==null){

//skip this page

page.setSkip(true);

}

page.putField("readme", page.getHtml().xpath("//div[@id='readme']/tidyText()"));

}

@Override

public Site getSite() {

return site;

}

public static void main(String[] args) {

Spider.create(new GithubRepoPageProcessor()).addUrl("https://github.com/code4craft").thread(5).run();

}

}

-

page.addTargetRequests(links)Add urls for crawling.

You can also use annotation way:

@TargetUrl("https://github.com/\\w+/\\w+")

@HelpUrl("https://github.com/\\w+")

public class GithubRepo {

@ExtractBy(value = "//h1[@class='entry-title public']/strong/a/text()", notNull = true)

private String name;

@ExtractByUrl("https://github\\.com/(\\w+)/.*")

private String author;

@ExtractBy("//div[@id='readme']/tidyText()")

private String readme;

public static void main(String[] args) {

OOSpider.create(Site.me().setSleepTime(1000)

, new ConsolePageModelPipeline(), GithubRepo.class)

.addUrl("https://github.com/code4craft").thread(5).run();

}

}

Docs and samples:

Documents: http://webmagic.io/docs/

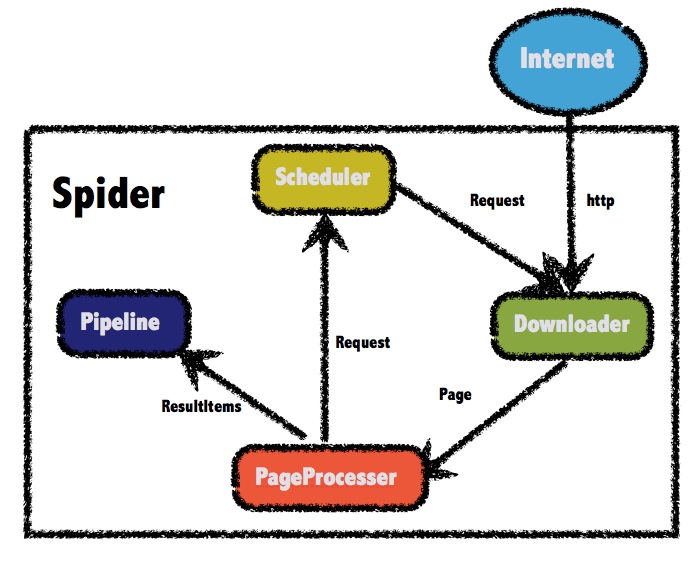

The architecture of webmagic (refered to Scrapy)

Javadocs: http://code4craft.github.io/webmagic/docs/en/

There are some samples in webmagic-samples package.

Lisence:

Lisenced under Apache 2.0 lisence

Contributors:

Thanks these people for commiting source code, reporting bugs or suggesting for new feature:

- ccliangbo

- yuany

- yxssfxwzy

- linkerlin

- d0ngw

- xuchaoo

- supermicah

- SimpleExpress

- aruanruan

- l1z2g9

- zhegexiaohuozi

- ywooer

- yyw258520

- perfecking

- lidongyang

- seveniu

- sebastian1118

- codev777

- fengwuze

Thanks:

To write webmagic, I refered to the projects below :

-

Scrapy

A crawler framework in Python.

-

Spiderman

Another crawler framework in Java.

Mail-list:

https://groups.google.com/forum/#!forum/webmagic-java

http://list.qq.com/cgi-bin/qf_invite?id=023a01f505246785f77c5a5a9aff4e57ab20fcdde871e988

QQ Group: 373225642